Complacency: A Useful Concept in Safety Investigations?

There is a fine line between confidence (from the Latin confidentia ‘to have full trust’) and over-confidence. Daniel Kahneman contends that of all the various flaws that bedevil decision-making, over-confidence is the most damaging. While an etymologist may argue ‘complacency’ (from the Latin complacentia ‘to please’) is more about smugness, it is often used in a similar way to over-confidence.

But is complacency just another convenient ‘label’ for impatient investigators to apply? Is it not by its nature (unlike confidence) an unhelpfully negative concept? Moray & Inagaki (2000) say:

To claim that behaviour is complacent is to blame the operator for failure to detect signals. This is undesirable, since so-called complacent behaviour may rather be the fault of poor systems design.

Indeed is complacency only about signal detection? Is it just about front-line operators?

We look at a paper that examined complacency and proposed a different way of framing the concept, and at how one accident investigation agency chose to recommend tackling it.

A Theory of Complacency

In a paper presented at the 2012 IChemE Hazards 23 conference Gemma Innes-Jones of LR Scandpower wrote:

Complacency is one aspect of operator behaviour that is often cited as a major contributing factor to accidents…

In everyday usage complacency is often used to suggest wilful and ill-advised neglect on the part of an individual.

Some of the common symptoms suggested in accident reports include ignoring warning signs, over confidence, assuming the risk decreases over time, neglecting safety procedures, becoming satisfied with the status quo, the erosion of the desire to remain proficient and accepting lower standards of performance.

Complacency has also been defined in relation to boredom, overreliance, overconfidence, contentment, a low index of suspicion, workload and resource allocation, trust in automation and attention [see paper for citations].

An earlier paradoxical definition was that (emphasis added):

Complacency is caused by the very things that should prevent accidents, factors like experience, training and knowledge contribute to complacency. Complacency makes crews skip hurriedly through checklists, fail to monitor instruments closely or utilize all navigational aids. It can cause a crew to use shortcuts and poor judgement and to resort to other malpractices that mean the difference between hazardous performance and professional performance.

Wiener, E.L. (1981). Complacency: is the term useful for air safety? 26th Corporate Aviation Safety Seminar, Flight Safety Foundation

Examples:

- Kern highlights one example of what he calls ‘creeping complacency’, where an EMB-120 Brasilia pilot who was a ‘stand-out aviator’, flying a routine route, responded to a cabin crew request and doubled the rate of climb, causing a near-fatal accident.

- The NTSB identified complacency in a police helicopter accident, where a demanding night flight was commenced and opportunities to land as conditions deteriorated were ignored.

- The NBAA mentioned complacency in a report that highlighted that FDM data showed low compliance with pre-take-off flight control checks amongst business aviation pilots.

- The UK AAIB quote a pilot commenting on complacency in his miscalculation of A320 take-off performance at his home base.

- A First Officer commented on the ease of becoming complacent during repetitive, frequent operations in an ATSB report.

- A Service Inquiry into the death of a soldier whilst sports parachuting commented on an organisationally “complacent approach to safety, training and parachute operations”.

In some cases complacency is used as a term but not defined. For example, Gareth Lock has pointed out that the Human Factors Analysis and Classification System (HFACS) taxonomy “lists it as part of adverse mental states but don’t define what it means”.

Innes-Jones observes that past definitions defined complacency in relation to other constructs rather than as a singular behaviour, and that there is little scientific consensus on what complacency actually is and how it develops. Her paper says that the past research…

…suggests that complacency effects are most likely to occur when operators interact with automated systems that are perceived to be highly reliable and are escalated in situations with high task demands.

[Where]…the operator weighs the perceived benefits of reduced monitoring behaviour (lower workload) against the perceived cost (risk of an automation failure).

Also:

Operator experience increases familiarity with the system and improves their skills and ability to resolve emerging problems. Experience may have also taught them about the consequences of certain actions and the limits to which they can push the system. This may lower the perceived risk, leading to people feeling safer about the situation, and more and greater risks may be taken (Hunter, 2006).

However:

Much of the empirical research into complacency has focused on the performance of an operator while monitoring an automated system and refers to the ensuing decline of that monitoring performance.

A Recommendation: Complacency as Risk Perception and Tolerance Behaviour

Innes-Jones recommends (emphasis added):

Rather than applying an ill-defined label such as complacency, it would be better to frame this behaviour in terms of the perception of, and tolerance to, risk, and how these influence an individual’s decision to behave or act in a certain way.

These concepts are readily understood, more grounded in theory and offer the opportunity to develop individual and collective strategies to mitigate its effects.

One explanation for behaviour that leads to an undesirable event is that the individual did not perceive the risk inherent in the situation, and consequently did not undertake actions to avoid or mitigate the risk.

Another explanation is that while an individual may correctly perceive the risks involved in a situation, they may choose to continue if they perceive the risk is not sufficiently threatening. Those individuals would be described as having a greater tolerance or acceptance of risk, compared to others.

She gives one example, working at height, where the risk may be downplayed excessively because fall protection is in place. The use of other protection and warning systems may similarly give an excessive sense of security.

Arguably, this approach also works at the organisational level.

Complacency as Risk Perception and Tolerance in Practice

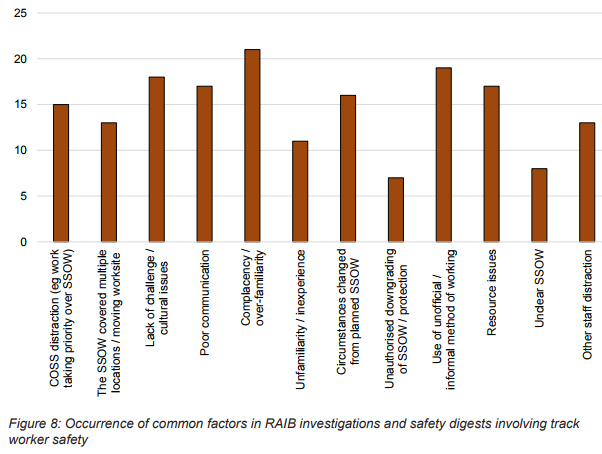

The UK Rail Accident Investigation Branch (RAIB) considered Gemma Innes-Jones’s approach in a report into the safety of track workers working on Network Rail’s infrastructure “outside possessions of the line” (i.e. where normal running of trains continued during engineering work).

Simon French, Chief Inspector of Rail Accidents, said:

The last fatality as a result of a track worker being struck by a train occurred in 2014; there have been six such fatalities over the last ten years. However, in our recent annual reports the RAIB has expressed a concern about the number and severity of serious ‘near miss’ incidents, some of which have included the potential to result in multiple fatalities. By way of example, during 2015 we identified 71 incidents in which track workers working outside a possession on Network Rail infrastructure were at risk of being struck by moving trains.

Our analysis has shown that, in more than half of the incidents, circumstances on site had changed from those envisaged by the pre-planned safe system of work.

Our analysis has also found that the behaviour and attitudes of track workers, including those with responsibilities for leading safety, are major factors…

Given that behavioural and cultural issues can lead to breakdowns in site discipline or loss of vigilance, the RAIB considers that the industry should reinvigorate the training it provides to track workers in the ‘non-technical skills’ needed to work safely on the railway (i.e. generic skills such as the ability to take information, focus on the task, make effective decisions, and communicate clearly with others).

In their analysis of the ten near misses examined from 2015 on, RAIB observe that:

Five of the incidents…that were examined as part of this investigation might have been prevented by a more appropriate perception of the risks on the part of the COSS [Controller of Site Safety] or the signallers involved.

Furthermore, when they examined track worker accidents and incidents back to 2005, ‘complacency and over familiarity’ was the most common classification factor, occurring in 75% of them.

At this point we must observe that this data depends on accurate and consistent use of the taxonomy, and we must consider the possibility that ‘complacency’ was being used as a ‘convenient label’. However, the RAIB do publish summaries for readers to examine: in the main body of the report for the recent near misses (along with coding in Appendix G) and in Appendix M for the others.

So in the case of a near miss close to Bessacarr, Doncaster, 14 May 2015:

All of the team agreed that complacency was a factor in the incident. They had earlier walked back from the site of work without a line blockage [i.e. with trains running] and without incident, so they assumed there would be no problem in walking back after lunch without taking a line blockage.

This fits with Innes-Jones’s concept, in that prior success encouraged repeat behaviour despite the actual risk.

In their recommendations, RAIB Recommendation 2 addresses complacency (among other issues):

The intent of this recommendation is to improve the non-technical skills of track workers.

Network Rail should review the effectiveness of its existing arrangements for developing the leadership, people management and risk perception abilities of staff who lead work on the track, as well as the ability of other staff to effectively challenge unsafe decisions. This review should take account of any proposed revisions to the arrangements for the safety of people working on or near the line. A time-bound plan should be prepared for any improvements to the training in non-technical skills identified by the review. [Emphasis added]

Our Comments

Innes-Jones’s proposal has the novel benefit of both widening the scope beyond simply monitoring automated systems AND narrowing the focus to behaviours related to risk assessment and decision making. This certainly helped one accident investigation body propose credible recommendations, similar to the concept of chronic unease.

Another advantage of the proposal is that it is also easier to consider whether organisations themselves have become immune to the risks they face. This helps with both the incubation concept in Turner’s Man-Made Disaster Theory and Reason’s Organizational Accident Concept.

A shortcoming remains that ‘complacency’ is a judgemental label, albeit one that is convenient shorthand. Terminology that recognised the full spectrum of human performance would be better.

The identification and assessment of potential threats and the monitoring for their development are valid and distinct skills to develop and behaviours to assess.

Success breeds complacency, complacency breeds failure, only the paranoid survive.

Andrew S Grove, Former CEO and Chairman of Intel

UPDATE 18 February 2018: Autopilot, Mind Wandering (MW), and the Out Of The Loop (OOTL) Performance Problem. According to researchers from ONERA and CNRS:

The OOTL phenomenon has been involved in many accidents in safety-critical industries, as demonstrated by papers and reports that we have reviewed. In the near future, the massive use of automation in everyday systems will reinforce this problem. MW may be closely related to OOTL—both involve removal from the task at hand, perception drop, and understanding problems. More importantly, their relation to vigilance decrement and working memory could be the heart of their interactions. Still, the exact causal link remains to be demonstrated. Far from being anecdotal, such a link would allow OOTL research to use theoretical and experimental understanding accumulated on MW. The large range of MW markers could be used to detect OOTL situations and help us to understand the underlying dynamics. On the other hand, designing systems capable of detecting and countering MW might highlight the reason why we all mind wander. Eventually, the expected outcome is a model of OOTL–MW interactions which could be integrated into autonomous systems.

UPDATE 20 February 2018: BSEE, the US offshore regulator, publishes Safety Bulletin 010 “Complacency in Aviation is Everyone’s Challenge”, which discusses a flight requested to the wrong offshore location, a take-off with tie-downs still installed and walking past a tail rotor.

UPDATE 21 February 2018: Complacency and distraction are discussed in our article Flawed Post-Flight and Pre-Flight Inspections Miss Propeller Damage. We look at an Australian Transport Safety Bureau (ATSB) report into a Regional Express (Rex) Saab 340B incident in 2013 and in particular why propeller damage was missed on several inspections.

UPDATE 15 July 2018: HF Lessons from an AS365N3+ Gear Up Landing

UPDATE 17 November 2018: Investigation into F-22A Take Off Accident Highlights a Cultural Issue

