NASA Challenger Launch Decision

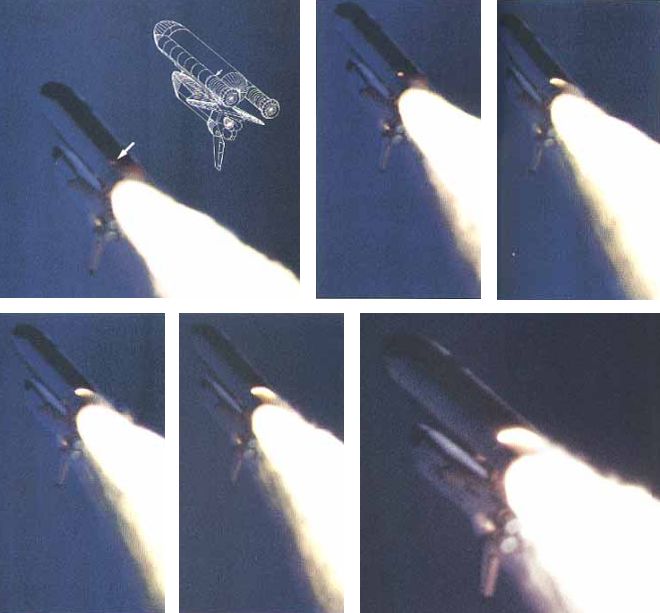

On the evening of Monday 27 January 1986, 34 people in three locations were preparing for what we now know as one of the most infamous telephone conferences in history. The call had been prompted by a weather forecast that predicted the temperature would drop to 22°F (-5.5°C) overnight. This group was just one of a number that night who had to decide if this would affect an event planned for 09:38 the next day: the launch of the Space Shuttle Challenger on flight STS-51L, the 25th Shuttle launch, with seven people aboard, from Kennedy Space Centre. Seventy-three seconds after launch, travelling at over Mach 3 at an altitude of 10.4 miles, the vehicle exploded.

We will look at this telecon and, in particular, at the conclusions of a study by sociologist Prof Diane Vaughan in her book The Challenger Launch Decision, published on the 10th anniversary of the disaster, along with other selected viewpoints on the disaster.

The Circumstances

The presidentially appointed Rogers Commission was tasked with investigating the accident. Over time the technical cause emerged. In its report the Commission identified that the low temperatures had compromised the reaction of the rubber ‘O’ rings that needed to move to seal the segments of the vehicle’s Solid Rocket Boosters (SRBs, sometimes called SRMs: Solid Rocket Motors) as the casing swelled under the pressure of the rocket gases.

The slow reaction of the stiffened ‘O’ rings allowed hot combustion gases to erode them and, ultimately, these gases escaped, causing a catastrophic explosion of the large external fuel tank.

Commission member and maverick physicist Richard Feynman’s demonstration of the stiffening of an ‘O’ ring at low temperatures with a cup of ice water, an ‘O’ ring and a C-clamp has entered popular folklore.

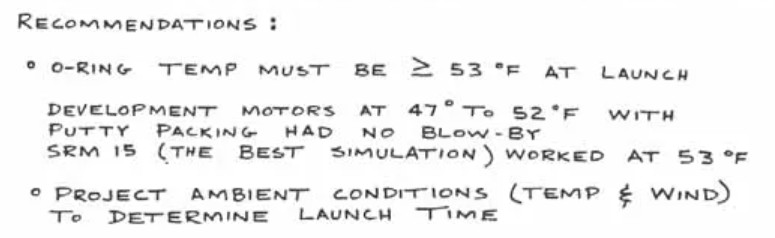

The effect of cold had been recognised before. NASA and engineers at Morton Thiokol, the SRB contractor, had been examining anomalies in ‘O’ ring performance from previous launches. Concerned that the launch was outside past launch experience and suspecting cold was a factor, Morton Thiokol made a “no launch” recommendation when the forecast came through. This was something they had never done before.

The infamous telephone conference was to discuss that recommendation with the NASA SRB specialists and managers. The data Morton Thiokol faxed to NASA to make their case was the same data they had previously used to support earlier launch recommendations, even though they now proposed a new temperature limitation.

Not only did that inconsistency draw a challenge from NASA, but blow-by on a 75°F launch seemed to undermine the claimed correlation. In the face of questions from NASA, the Morton Thiokol team in Utah asked for a brief off-line caucus to gather their thoughts. Unbeknown to participants at the Kennedy Space Centre and elsewhere, senior managers at Morton Thiokol decided to reverse that recommendation (one famously having been told to “take off your engineering hat and put on your management one”) without seeking a consensus with their engineers.

Statistician Edward Tufte has argued that if all the ‘O’ ring performance data had been presented, a different decision would likely have been made. However, it is unclear how much of this data had been collated in one place at that time.

Ironically, the telecon took place 19 years to the day after three astronauts of Apollo 204 (aka Apollo 1) died in a launch pad ground test fire. Many media commentators have speculated on overt or perceived NASA HQ or White House pressure to launch (either due to a series of embarrassing launch delays or to be in orbit in time for a live TV link-up during the President’s State of the Union address due later that day). No such pressure was ever proven.

Others contended that Morton Thiokol senior management were more concerned with the effect a no-launch recommendation would have on a bid for a new SRB contract.

In the excellent account Truth, Lies and ‘O’ Rings, Allan McDonald reports on various unhelpful and untruthful behaviours by some NASA and Morton Thiokol personnel in the days and months after the disaster. McDonald was Morton Thiokol’s most senior representative at the Kennedy Space Centre that night and continued to support the no-launch recommendation (due to the ‘O’ rings and two other reasons discussed in the first video below). He was the man who brought the telecon to the Rogers Commission’s attention.

While obfuscating behaviour is disappointing, the really interesting behaviour is what happened in the run-up to the launch. This is what Diane Vaughan’s research sheds light on.

Diane Vaughan’s Analysis

Vaughan originally chose to study the Challenger disaster because her background was in examining corporate misconduct. She anticipated, based on early press coverage of the Rogers Commission, that malpractice had influenced how the decision was taken. She describes her research as an ‘archaeological revisit’ or ‘historical ethnography’, as it involved a retrospective review of the Rogers Commission report, 160 interviews done for the Commission, her own interviews and 122,000 pages of NASA material. As one reviewer wrote:

One need not be a sociologist to appreciate Vaughan’s fine detective work in probing the culture in which the launch decision was made. She spent the better part of a decade immersed in the archival records…for an in-depth analysis of the context and behaviors that resulted in the ill-fated launch decision. [She found a] more subtle, more complex explanation of the decision that challenges some of the very conclusions she set out to validate. …Vaughan not only examined data from the critical twenty-four hours preceding the launch but also probed the multi-year history of shuttle launches and solid rocket booster technology.

As Vaughan describes:

To determine whether this case was an example of misconduct or not, I had decided on the following strategy: Rule violations were essential to misconduct, as I was defining it. The rule violations identified in Volume 1 [of the Rogers Commission Report] occurred not only on the eve of the launch, but on two occasions in 1985, and there were others before. I chose the three most controversial for in-depth analysis. I discovered that what I thought were rule violations were actions completely in accordance with NASA rules!

An example was where Commission members were seen, in video recordings of their hearings, to interpret the use of the formal NASA Waiver process as arbitrary and reckless ‘waiving’ of requirements.

I had a possible alternative hypothesis – controversial decisions were not calculated deviance and wrongdoing, but normative to NASA insiders – and my first inkling about what eventually became one of the principal concepts in explaining the case: the normalization of deviance.

Vaughan found:

No one was playing ‘Russian roulette’; engineering analysis of damage and success of subsequent missions convinced them [crucially engineers and managers] that it was safe to fly. The repeating patterns were an indicator of culture – in this instance, the production of a cultural belief in risk acceptability. Thus, the ‘production of culture’ became my primary causal concept at the micro-level, explaining how they gradually accepted more and more erosion, making what I called ‘an incremental descent into poor judgment’. The question now was why.

Diane Vaughan’s Conclusions

Vaughan’s research identified three organisational factors that resulted in the disaster:

- Normalization of Deviance: by which in-service experience was rationalised and became accepted as normal via the evolving work group culture, i.e. the ‘production of culture’ (values and norms).

- The Culture of Production: where the drumbeat of the launch schedule and budgetary pressures both drove and constrained activity: “Within the culture of production, cost/schedule/safety compromises were normal and non-deviant for managers and engineers alike.”

- Structural Secrecy: where indirect or siloed information flow obscured information and constrained understanding, with detail being stripped away as issues were communicated up the hierarchy. With the benefit of hindsight “…outsiders saw O-ring damage as a strong signal of danger that was ignored, but for insiders each incident was part of an ongoing stream of decisions that affected its interpretation”, among many other issues in a complex system. They saw signals that were mixed (i.e. inconsistent service experience), weak and normalised, thus diminishing their significance.

As one book review commented:

Vaughan’s book is not an expose of bureaucratic wrong-doing. Rather, it is a detailed analysis of how decision-making occurs in organizations, and how even rigorous internal and external monitoring will not avert disaster; indeed, the chilling conclusion she draws is that wrong decisions will be made not in spite of but because of rules and procedures. In this respect, The Challenger Launch Decision is a sobering analysis of the consequences of increasing dependence on complex technologies.

Vaughan says:

No one factor alone was sufficient, but in combination the three comprised a theory explaining NASA’s history of flying with known flaws.

Ironically, Vaughan also believes some of these factors affected the Commission’s investigation, as did a hindsight bias that skewed and narrowed the Commission’s attention. One source of further, more general, background on the culture of NASA is Howard McCurdy’s book Inside NASA: High Technology and Organizational Change in the U.S. Space Program.

After Challenger

Shuttle operations were suspended for almost three years and the SRB field joint was redesigned. In the final chapter Vaughan commented that, while risk-mitigating actions and improvements had taken place, by 1996:

NASA is again experiencing the economic strain that prevailed at the time of the disaster. Few of the people in top NASA administrative positions exposed to the lessons of the Challenger tragedy are still there. The new leaders stress safety, but they are fighting for dollars and making budget cuts. History repeats, as economy and production are again priorities.

Probably the greatest tragedy is that the organisational factors that contributed to the Challenger disaster ultimately also contributed to the loss of Shuttle Columbia during re-entry in 2003.

The Columbia Investigation

In the resulting investigation by the Columbia Accident Investigation Board (CAIB), under Admiral Hal Gehman, Diane Vaughan was initially an expert witness and later a researcher developing chapters on the organisational factors behind Columbia. In a new preface to a re-issue of her book, Vaughan “reveals the ramifications for this book and for her when a similar decision-making process brought down NASA’s Space Shuttle Columbia in 2003”. Shuttle operations ceased in 2011.

Safety Resources

We highly recommend this case study: ‘Beyond SMS’ by Andy Evans (our founder) & John Parker in Flight Safety Foundation, AeroSafety World, May 2008, which discusses the importance of leadership in influencing culture. You may also be interested in these Aerossurance articles:

- How To Develop Your Organisation’s Safety Culture: positive advice on the value of safety leadership and an aviation example of safety leadership development.

- How To Destroy Your Organisation’s Safety Culture: a cautionary tale of how poor leadership and communications can undermine safety.

- Safety Intelligence & Safety Wisdom

- HROs and Safety Mindfulness

- Consultants & Culture: The Good, the Bad and the Ugly

As Aerossurance’s Andy Evans notes in this co-written article: Safety Performance Listening and Learning – AEROSPACE March 2017:

Organisations need to be confident that they are hearing all the safety concerns and observations of their workforce. They also need the assurance that their safety decisions are being actioned. The RAeS Human Factors Group: Engineering (HFG:E) set out to find out a way to check if organisations are truly listening and learning.

The result was a self-reflective approach to find ways to stimulate improvement.

UPDATE 26 April 2016: Chernobyl: 30 Years On – Lessons in Safety Culture

UPDATE 28 August 2016: Safety Intelligence & Safety Wisdom

UPDATE 2 September 2016: 10 Year Anniversary: Loss of RAF Nimrod MR2 XV230

UPDATE 9 November 2016: USMC CH-53E Readiness Crisis and Mid Air Collision Catastrophe

UPDATE 24 January 2018: RCAF Production Pressures Compromised Culture

UPDATE 26 January 2019: MC-12W Loss of Control Orbiting Over Afghanistan: Lessons in Training and Urgent Operational Requirements

UPDATE 28 January 2021: The lessons learned from the fatal Challenger shuttle disaster echo at NASA 35 years on

UPDATE 9 August 2025: OceanGate Titan: Toxic Culture & Fatal Hubris

UPDATE 20 September 2025: Cold Comfort Conference Call: USAF F-35A Alaska Accident